
Predicting Time To Failure with ThingWorx Analytics

Predicting time to failure (TTF) or remaining useful life (RUL) is a common need in the IIoT world.

This post looks at some ways to implement it.

We are going to use one of the publicly available NASA datasets, which simulates turbofan engine degradation (https://c3.nasa.gov/dashlink/resources/139/).

The original dataset has 26 features, as listed below:

Column 1 – asset id

Column 2 – cycle/time of sensor data collection

Columns 3-5 – operational settings

Columns 6-26 – sensor measurements

In the training dataset the sensor measurements end when the failure occurs.
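The column layout above can be written down once and reused when reading the file. The sketch below assumes whitespace-separated records (adjust the split for a comma-separated export). The column names are made up for illustration and are not part of the dataset.

```python
# Column layout described above: 1 asset id, 1 cycle, 3 settings, 21 sensors.
# The names are hypothetical; the dataset itself has no header row names here.
COLUMNS = (
    ["asset_id", "cycle"]
    + [f"setting_{i}" for i in range(1, 4)]
    + [f"sensor_{i}" for i in range(1, 22)]
)


def parse_row(line: str) -> dict:
    """Split one whitespace-separated record into a name -> value mapping."""
    values = line.split()
    if len(values) != len(COLUMNS):
        raise ValueError(f"expected {len(COLUMNS)} fields, got {len(values)}")
    row = dict(zip(COLUMNS, (float(v) for v in values)))
    # The first two columns are identifiers/counters, not measurements.
    row["asset_id"] = int(row["asset_id"])
    row["cycle"] = int(row["cycle"])
    return row


print(len(COLUMNS))  # 26
```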

Data Collection

Since the prediction model is based on historical data, data collection is a critical point.

In some cases the data has already been collected in the past and you need to make the best of it. See the Data Preparation chapter below.

In situations where you are still collecting data, a few points are good to keep in mind. Some may or may not apply, depending on the type of data to be collected.

- More frequent (higher frequency) collection is usually better, especially for electronic measurements.

- In situations where one or more specific sensor values are known to impact the TTF, it is good to take measurements at different values of this sensor, running until failure each time without artificially modifying the values.

For example, for a light bulb with a normal working voltage of 1.5V, it is good to take measurements at, say, 1V, 1.5V, 2V, 3V and 4V, but each time run until failure. Do not start at 1.5V and switch to 4V after 1h. This would compromise what the model can learn.

- More variation is better, as it helps the prediction model to generalize. In the same voltage example, it is best to collect data for 1V, 1.5V, 2V, 3V and 4V rather than just 1.5V, which would be the normal running condition.

This also depends on the use case. For example, if we know for sure that the voltage will always be between 1.45V and 1.55V, then we can focus data collection on this range only.

- Once the failure is reached, stop collecting data. We are not interested in what happens after the failure, and collecting data after the failure will also lead to lower prediction model accuracy.

- Each failure run should be a separate cycle in the dataset. In other words, from a metadata standpoint, each failure run should be represented by a different ENTITY_ID.

TTF business need

Before going into data preparation and model creation, we need to understand what information is important in terms of TTF prediction for our business need.

There are several ways to conceive the TTF, for example:

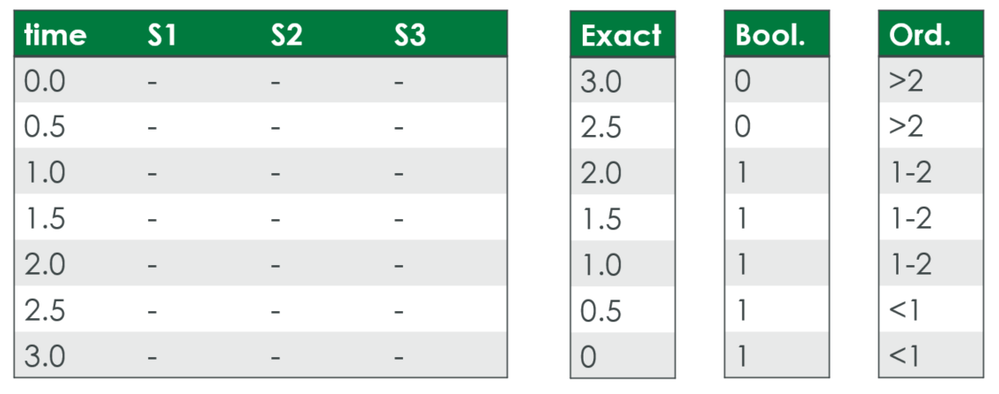

- Exact time value when failure might occur

  - This is probably going to be the most challenging to predict.

  - However, one should consider whether it is really necessary. Do we need to know that a failure might occur in 12 min as opposed to 14 min? Very often, knowing that the time to failure is less than X min is what matters, not the exact time.

So the following options are often more appropriate.

- Threshold

  - For some applications, knowing that a critical threshold is reached is all that is needed.

  - In this case a Boolean goal, for example lessThan30min or healthy with yes/no values, can be used.

  - This is usually much easier to predict than the exact value above.

- Range

  - For other applications we may need a bit more insight and try to predict some ranges, for example: lessThan30min, 30to60min, 60to90min and moreThan90min.

  - In this case we define an ordinal goal.

  - The caveat is that ThingWorx Analytics Builder currently does not support ordinal goals, though ThingWorx Analytics Server does. This only means that the model creation needs to be done through the API.

This is the option we will take with the NASA dataset.

The accompanying picture shows the 3 different types of TTF listed above.

Data Preparation

- General Feature engineering

Data preparation is always a very important step in any machine learning work. It is important to present the data in the most suitable way for the algorithms to give the best results.

Many practices can be used, but they are beyond the scope of this post.

The Feature Engineering post gives some starting points on this.

There are also many resources available on the Internet to get started, though the help of a data scientist may be necessary.
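A simple instance of such preparation is spotting features that never vary, since a column with a single value cannot help tell a healthy asset from a failing one. Here is a minimal sketch, assuming the rows have already been read into dictionaries. The column names and values are made up for illustration.

```python
def constant_columns(rows):
    """Return the names of columns that hold a single value across all rows."""
    if not rows:
        return []
    return [name for name in rows[0] if len({row[name] for row in rows}) == 1]


def drop_columns(rows, names):
    """Return copies of the rows without the given columns."""
    names = set(names)
    return [{k: v for k, v in row.items() if k not in names} for row in rows]


# Made-up sample: sensor_a never changes, sensor_b does.
rows = [
    {"cycle": 1, "sensor_a": 100.0, "sensor_b": 641.8},
    {"cycle": 2, "sensor_a": 100.0, "sensor_b": 642.1},
    {"cycle": 3, "sensor_a": 100.0, "sensor_b": 642.4},
]
to_drop = constant_columns(rows)
print(to_drop)  # ['sensor_a']
print(drop_columns(rows, to_drop)[0])
```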

As an example, in the original NASA dataset we can see that a few features have a constant value. They are therefore unlikely to impact the prediction and will be removed. This frees computational resources and prevents confusion in the model.

- Sensor data resampling

The data sampling across the different sensors should be uniform. In a real-world scenario, however, sensor data may be collected at different time intervals. Data transformation/extrapolation should be done so that all sensor values are at the same frequency in the uploaded dataset.

- TTF feature

Since we want to predict the time to failure, we need a column in the dataset that represents this value for the data we have.

In a real-world scenario we obviously cannot measure the time to failure, but we usually have sensor data up to the point of failure, which we can use to derive the TTF values.

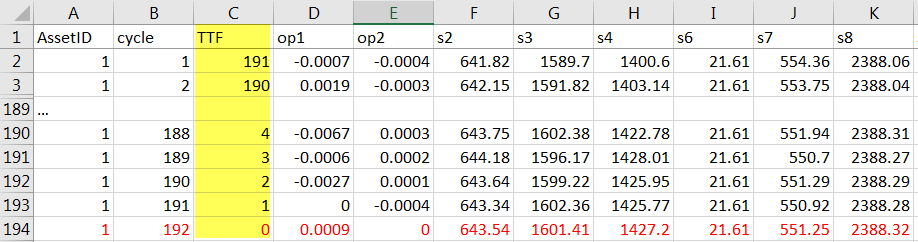

This is what happens in the NASA dataset: the last cycle corresponds to the time when the failure occurred. We can therefore derive a new TTF feature in this dataset. It starts at 0 for the last cycle, when the failure occurred, and is incremented by 1 for each earlier cycle back to the very first measurement, as shown in the accompanying picture.
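The derivation can be sketched in a few lines. This assumes one row per cycle, with cycles increasing by 1 within each asset. The field names asset_id and cycle are placeholders, not the names used in the attached files.

```python
from collections import defaultdict


def add_ttf(rows):
    """Return copies of the rows with a TTF field: 0 at the last cycle of each asset."""
    last_cycle = defaultdict(int)
    for row in rows:
        asset = row["asset_id"]
        last_cycle[asset] = max(last_cycle[asset], row["cycle"])
    return [dict(row, TTF=last_cycle[row["asset_id"]] - row["cycle"]) for row in rows]


# Made-up sample: asset 1 fails at cycle 3, asset 2 at cycle 2.
rows = [
    {"asset_id": 1, "cycle": 1},
    {"asset_id": 1, "cycle": 2},
    {"asset_id": 1, "cycle": 3},
    {"asset_id": 2, "cycle": 1},
    {"asset_id": 2, "cycle": 2},
]
print([row["TTF"] for row in add_ttf(rows)])  # [2, 1, 0, 1, 0]
```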

Once this TTF column is defined, we may need to transform it further, depending on the path we choose for TTF prediction, as described in the TTF business need chapter.

In the case of the NASA dataset we choose a range TTF with values more100, 50to100, 10to50 and less10, representing the number of remaining cycles until the predicted failure.

This is the information we need to predict in order to plan a suitable maintenance action.
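Turning the numeric TTF into these four labels is a simple binning step. The post does not say which range the boundary values 10, 50 and 100 belong to, so the choice below is an assumption and should be adapted to the business need.

```python
def ttf_range(ttf: int) -> str:
    """Map a remaining-cycle count to one of the four range labels.

    Boundary handling is an assumption: 10 and 50 go to the upper range,
    100 stays in 50to100.
    """
    if ttf < 10:
        return "less10"
    if ttf < 50:
        return "10to50"
    if ttf <= 100:
        return "50to100"
    return "more100"


for cycles in (0, 10, 75, 150):
    print(cycles, ttf_range(cycles))
```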

Our transformed TTF column looks as below:

Once the data in CSV format is ready, we need to create the JSON file that represents the metadata.

In the case of a range TTF, the goal is defined as an ordinal goal as below (see the attachment for the full metadata JSON file):

    {
      "fieldName": "TTF",
      "values": ["less10", "10to50", "50to100", "more100"],
      "range": null,
      "dataType": "STRING",
      "opType": "ORDINAL",
      "timeSamplingInterval": null,
      "isStatic": false
    }
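Before uploading, it can be worth checking that every label in the TTF column of the CSV also appears in the values list of the metadata. The sketch below is a local sanity check written for this post, not a ThingWorx Analytics service. The sample labels are made up.

```python
import json

# The ordinal goal definition shown above, parsed as JSON.
goal_metadata = json.loads("""
{"fieldName": "TTF",
 "values": ["less10", "10to50", "50to100", "more100"],
 "range": null, "dataType": "STRING", "opType": "ORDINAL",
 "timeSamplingInterval": null, "isStatic": false}
""")


def unknown_labels(labels, metadata):
    """Return the labels found in the data that the metadata does not declare."""
    allowed = set(metadata["values"])
    return sorted(set(labels) - allowed)


# Made-up column content with one misspelled label.
print(unknown_labels(["less10", "more100", "50-100"], goal_metadata))  # ['50-100']
```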

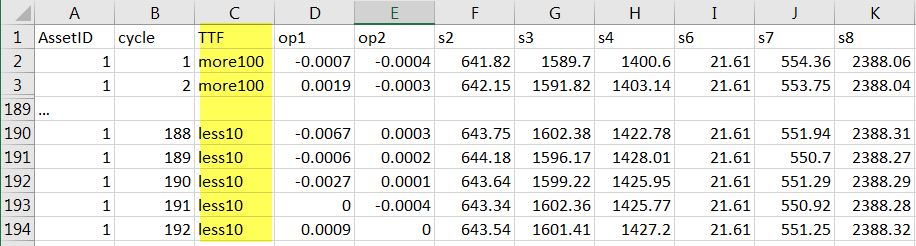

Model creation

Once the data is ready, it can be uploaded into ThingWorx Analytics and work on the prediction model can start.

ThingWorx Analytics is designed to make machine learning easy and accessible to non data scientists, so this step will be easier than with other solutions.

However, some trial and error is needed to refine the model, which may also involve reworking the dataset.

Important considerations:

- When dealing with time to failure prediction, it is usually necessary to unset Use Goal History in the Advanced parameters of the model creation wizard.

If using the API, the equivalent is to set the virtualSensor parameter to true.

- Tests with Redundancy Filter enabled should be done, as this has been shown to give better results.

- For a first attempt it is a good idea to keep Lookback Size at 0. This tells ThingWorx Analytics to find the best lookback size among 2, 4, 8 and 16.

If you need a different value, or know that a different value is better suited, you can change it accordingly. However, bear in mind the following:

  - A larger lookback size leads to less data being available for training, since more data is needed to predict one goal.

  - A larger lookback size leads to a significant memory increase. See https://www.ptc.com/en/support/article?n=CS294545
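To get a feel for the first point, the sketch below counts how many training examples remain if each example needs a full window of consecutive rows from the same failure run. This plain sliding-window count is an assumption used for illustration. How ThingWorx Analytics builds its windows internally is not described in this post. The run lengths are made up.

```python
def training_windows(run_lengths, lookback):
    """Count sliding windows of `lookback` rows that fit inside each run."""
    return sum(max(length - lookback + 1, 0) for length in run_lengths)


# Made-up run lengths (number of cycles recorded before each failure).
runs = [20, 12, 6]
for lookback in (2, 4, 8, 16):
    print(lookback, training_windows(runs, lookback))
# 2 35
# 4 29
# 8 18
# 16 5
```

With a lookback of 8 the shortest run no longer contributes any example, and with 16 only the longest one does.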

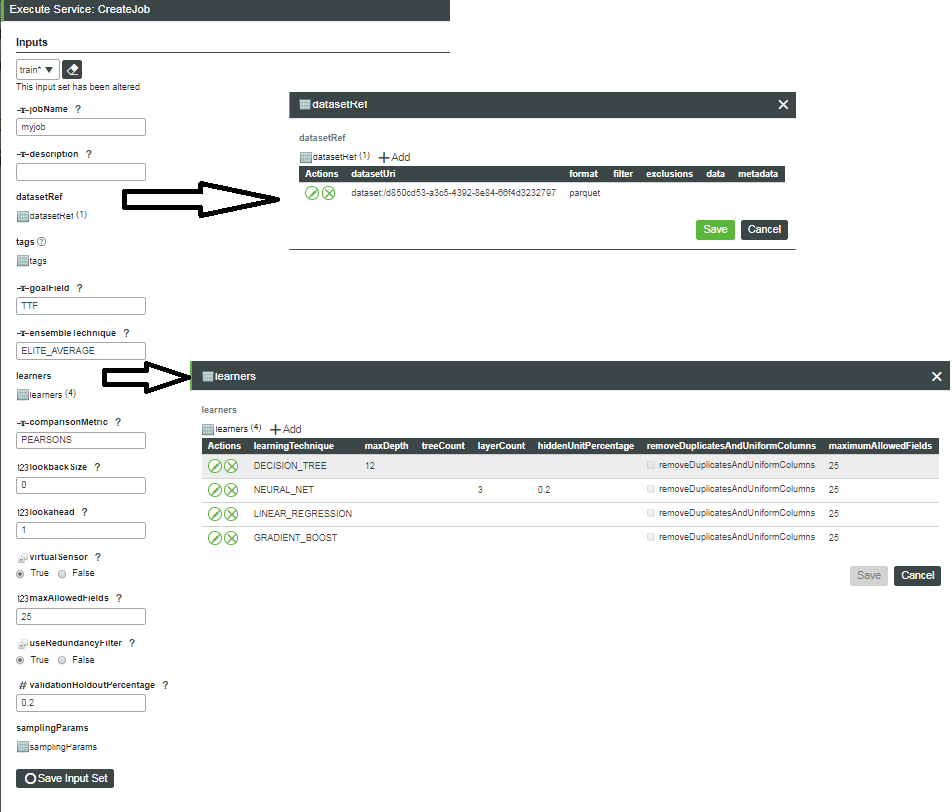

In the case of the NASA dataset, since we are using an ordinal goal, we need to create the model through the API.

For a more productive approach, this can be done through a mashup and services (see How to work with ordinal and categorical data in ThingWorx Analytics ? for an example).

As a test, the TrainingThing.CreateJob service can be called directly from Composer, as shown below:

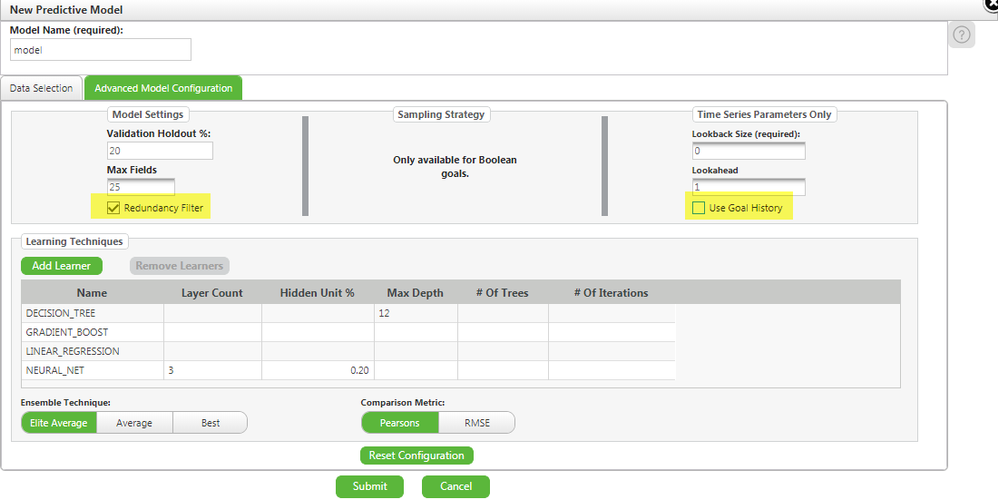

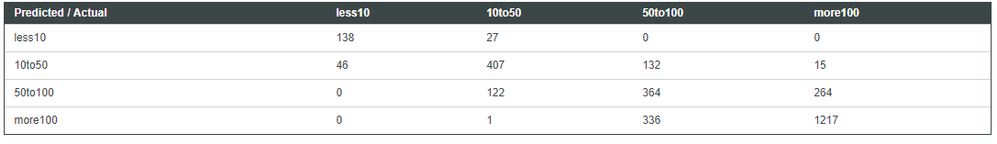

Once the model is created, we can check some performance statistics in ThingWorx Analytics Builder or, in the case of an ordinal goal, via the ValidationThing.RetrieveResults service. The most relevant result for an ordinal goal is the confusion matrix.

Here is the confusion matrix I get:

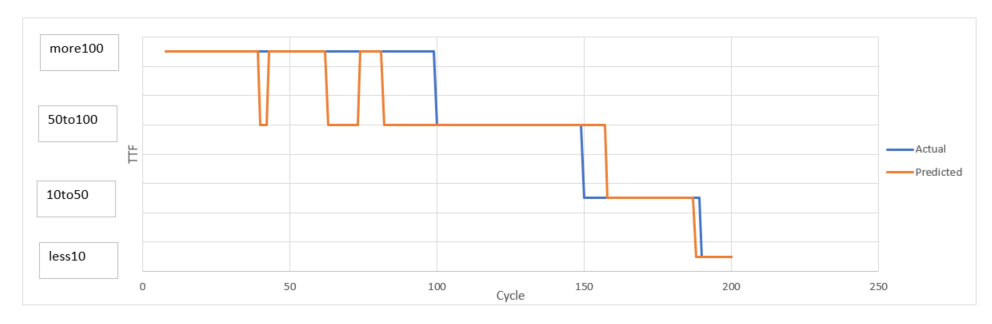

Another validation is to compute some PVA (Predicted vs Actual) results for some validation data.

ThingWorx Analytics runs validation automatically when using ThingWorx Analytics Builder and presents some useful performance metrics and graphs. In the case of an ordinal goal, we still get this automatic validation run (hence the above confusion matrix), but no PVA graph or data is available. The comparison can be done manually if some data is kept aside and not passed to the training microservice. Once the model is completed, we can score this validation dataset (using PredictionThing.RealTimeScore or BatchScore for an ordinal goal, or the Builder UI for other goals) and compare the prediction results with the actual values.
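Once the scored labels and the held-back actual labels are exported, the manual comparison can be done offline. The sketch below is a plain Python illustration that does not call any ThingWorx service. The two label lists are made up.

```python
from collections import Counter

LABELS = ["less10", "10to50", "50to100", "more100"]


def confusion_matrix(actual, predicted, labels=LABELS):
    """Rows are actual labels, columns are predicted labels, both in `labels` order."""
    counts = Counter(zip(actual, predicted))
    return [[counts[(a, p)] for p in labels] for a in labels]


def accuracy(actual, predicted):
    """Share of rows where the predicted label equals the actual label."""
    hits = sum(a == p for a, p in zip(actual, predicted))
    return hits / len(actual)


# Made-up validation rows.
actual = ["more100", "50to100", "50to100", "10to50", "less10", "less10"]
predicted = ["more100", "50to100", "10to50", "10to50", "less10", "10to50"]

for label, row in zip(LABELS, confusion_matrix(actual, predicted)):
    print(f"{label:>8}", row)
print("accuracy:", round(accuracy(actual, predicted), 2))
```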

Here is one example:

Depending on the business case, this model can be deemed acceptable or may need rework, such as changing the range values, changing the learners' parameters, modifying the dataset …

There is certainly a fair amount of experimentation involved before arriving at the optimal model, but hopefully this post gives some good starting points.

Resources:

Original Dataset attached as train_FD001-original.csv

Transformed dataset attached as train_FD001-TTF-transformed.csv

json metadata file for transformed dataset attached as train_FD001-ttford.json


Hi there,

Very nice article. Thanks for the post.

Could you please help me understand how to create the graph shown below (predicted vs actual for ordinal goals)? I could not find a suitable service to draw it. I see that RetrieveResult from PredictionThing gives the predicted values in an infotable, but I could not find any service that returns the actual values in an infotable to compare with.

Regards

Tushar


Hi @tushar

Thank you for your comment.

The PVA can be retrieved using the RetrievePVAs service of the ValidationThing. However, this does not support categorical or ordinal goals, so if you are using a goal of this type there is no direct way.

I created the mentioned graph in a very manual way (there might be ways to automate this, but I did not investigate as I just needed a quick graph for illustration purposes):

- I scored a validation set (for which I therefore knew the values of the goal).

- I then compared the predicted values (the result of the scoring job) with the actual values from the original validation set.

- The graph was simply created in Excel with the 2 series as input.
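For anyone wanting to skip the hand-editing, the two series can be prepared with a short script. This is only a sketch and not what was used for the original graph. It maps each ordinal label to its position in the values list so that a spreadsheet can plot both series on the same axis. The sample labels are made up.

```python
import csv
import io

ORDER = ["less10", "10to50", "50to100", "more100"]
RANK = {label: position for position, label in enumerate(ORDER)}


def pva_csv(actual, predicted):
    """Return CSV text with one line per scored row and numeric ranks for plotting."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["row", "actual", "predicted", "actual_rank", "predicted_rank"])
    for index, (a, p) in enumerate(zip(actual, predicted), start=1):
        writer.writerow([index, a, p, RANK[a], RANK[p]])
    return buffer.getvalue()


print(pva_csv(["more100", "50to100", "less10"], ["more100", "10to50", "less10"]))
```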

Hope this helps

Kind regards

Christophe


Hi Christophe,

I was trying to run your example with TWA 8.5.4 and am experiencing this issue:

Error reading entity from input stream. Unrecognized field "code" (class com.thingworx.analytics.validation.service.dto.request.ValidationRequestResponse), not marked as ignorable (2 known properties: "jobId", "statusUri"]) at [Source: (org.glassfish.jersey.message.internal.ReaderInterceptorExecutor$UnCloseableInputStream); line: 1, column: 12] (through reference chain: com.thingworx.analytics.validation.service.dto.request.ValidationRequestResponse["code"]) For support cases please provide this log tag: 1c798fe8-b47e-45ce-a23f-b1eadf40715f

There is no "code" field in the dataset though. Has something changed so much in 8.5.4 that it prevents this example from running?

Thanks a lot,


Hi @anickolsky

I have just run a test in 8.5.4 and did not get any error. I was able to upload the dataset and build the model.

The only thing that was not working was the goalField: it should be TTFord and not TTF as in the picture. Apart from that, the model ran without issue.

Can you clarify when exactly you get the error?

Assuming this is during model creation, how do you build the model? Do you use a mashup/service, or do you call the CreateJob service of the TrainingThing manually?

Can you download the TWALogCollector from https://www.ptc.com/en/support/article/CS316782 and upload the resulting gz or zip file?

Thanks

Christophe


Hi Christophe,

I ran it using direct service invocation from Composer.

I will check the points from your mail and get back to this thread.

Many thanks!

Regards

Andrey


Hi Christophe,

It runs perfectly now.

It seems that, using the screenshot as an example, I had mistakenly set removeDuplicateAndUniformColumns=true.

Is there a common way to display the confusionMatrix result in a specialized widget? A bubble chart, or what should be used to handle ordinal goals?

Many thanks,

Andrey

PS. I suppose there should be 4 ROC matrices, similar to those used for Boolean goals? This is what I get now, which is not similar to your output. And could you help me understand how to interpret the summary on the last screenshot?

And as I see it, the core of this example is the data transformation: the way you get a single dataset file from the many original data files.


Hi @anickolsky

Good to hear it works for you now.

The very last screenshot is about the PVA comparison. This is something I did manually in Excel; see my answer to Tushar dated 01-07-2019 04:49 AM above.

If you were instead referring to the confusion matrix, the output you get is indeed the expected output from the service. You would need to write a custom service to format it all into one matrix. This is what I did at the time, but I don't seem to have that service anymore.

Hope this helps

Christophe


This is a very informative blog for me. I am very much benefited after reading this blog. Keep sharing.


Hi Christophe,

Could you please explain what difference the ID makes? I understand that if I use only the lines for ID 1, for example, I will get the same results, since each ID should have a different model.

I could not understand the relation between the ID and the results.

Many thanks!

Felipe Duarte


Hi Felipe

I assume you are talking about the field AssetID with the opType ENTITY_ID.

Because we are using a time series dataset, it needs additional fields compared to a standard dataset. It needs a field with opType TEMPORAL, which defines the sequence of events, and a field with opType ENTITY_ID, which defines the different assets monitored or the different occurrences.

In the case of TTF prediction, each different ENTITY_ID represents a different occurrence of the failure. It could be happening on different assets or always on the same asset.

The more occurrences you have, the more likely the model created will be accurate.

For example, you could simulate a TTF prediction for a light bulb.

You would run multiple tests until the light bulb fails and collect relevant data at a regular interval (the interval will be the TEMPORAL field).

You would assign a different ENTITY_ID to each test run.

So each ENTITY_ID represents a different test run in which the light bulb went from new to failure.

As indicated above, the more test runs you do, the more accurate the prediction model is likely to be, because it has more data from which to learn the behaviour of the light bulb.
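To make this concrete, here is a small sketch of how the light bulb test runs could be flattened into one dataset. The field names run_id, step and current are made up. In the metadata, run_id would carry the opType ENTITY_ID and step the opType TEMPORAL. The readings are invented.

```python
def build_rows(test_runs):
    """Flatten per-run readings into rows tagged with a run id and a step counter."""
    rows = []
    for run_id, readings in enumerate(test_runs, start=1):
        for step, current in enumerate(readings, start=1):
            rows.append({"run_id": run_id, "step": step, "current": current})
    return rows


# Two made-up test runs, each recorded from new until the bulb failed.
test_runs = [[0.30, 0.31, 0.35], [0.30, 0.33]]
for row in build_rows(test_runs):
    print(row)
```

Every run restarts its step counter at 1, and the last row of each run is the failure point.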

Hope this helps

Christophe