Community Tip - Your Friends List is a way to easily have access to the community members that you interact with the most! X

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

Help with programming

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

Help with programming

Rather than send the actual spreadsheet with all the raw data I have attached a pdf which shows some of the data.

From the matrix Z I have extracted the data that exceeds my reference value, so I have a matrix NewLo which shows all the anodic values (i.e. the ones that exceed my reference value).

The extracted data is not contiguous, since the values are not always anodic.

What I am trying to do is to determine the longest continuous period for which the values are anodic. This is Tmax and that value is used in an acceptance criterion formula. My logic is that if the row numbers are contiguous then start counting (i.e. from 305-308 = 4 seconds, or 523 - 525 = 3 seconds etc)

I am hoping that someone will be able to point me in the right direction.

Thank you.

Thanks.

- Labels:

-

Programming

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

It looks to me that column 0 of Z contains the data and column 1 is time.

So I am wondering why you look in in the whole matrix for values greater or smaller than E.ref.

Shouldn't you rather look just in the data column? I guess your Hi.count and Lo.count atre meaningless numbers.

What you have done with vlookup should give you correct results as it just looks at the very first column which is the data column in your case. So Lo is the vector of data values which are higher than -1 and Hi is the vector of values which are lower than -1. Any logic in the naming of variables?

From your plot it looks like all your data is positive an in the range from 0 to 270000. So I am surprised about the low and negative mean values. Whats going on here?

Especially the mean of Lo should be much higher, I would guess.

A further discrepancy I spotted is this: You read in 293719 lines from an Excel file and your text states that this is the data from 48 hours measured every second. But 293719 seconds are approx. 81.6 hours, not 48!??

Concerning your question: As far as I understood what you wrote, you are just looking for a program which looks for the longest run of consecutive numbers in the second column of NewLo. Is this correct?

What would the output of such a program be? Just the length of this run? Or the position (measured from ..?), or the consecutive data in a nx2 matrix?

It might be a good idea to attach the worksheet along with some dummy, cut down in size, but reasonable demo data yo we have something to play with.

BTW, I don't trust match, vlookup, etc. as those function always just work with the tolerance set by TOL and this can be troublesome and lead to unsuspected results, especially when working with units and/or very small values. This might not be a problem with gt or lt as needed in your sheet, but I would rather write small programs which will loop through your matrix and filter out the needed values via if statements.

A program which filters all values lower or higher than a given one could at the same time look for the maximum continuous sequence not filtered. Just an idea. Of course you could leave it the way you have and use a function which just loops through NewLo and looks for the values in the second time column.

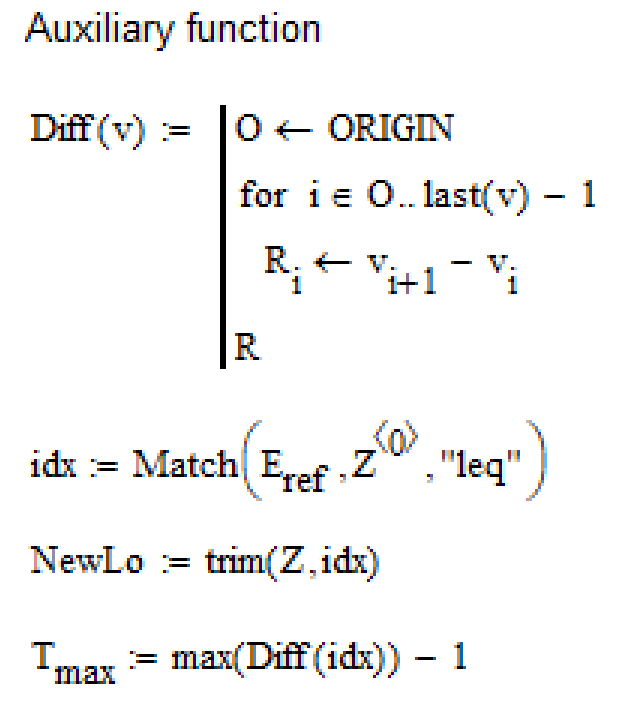

A suggestion in case you still trust match 😉

You can easily create the matrix NewLo which contains all data values greater than E.ref by using

NewLo := trim(Z, Match(E.ref,Z<0>,"leq"))

Z<0> denotes referencing column 0 of Z.

This includes no error check. The command will fail in two cases. Either if all data values are greater than E.ref or when all data value are greater than E.ref.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

Just an additional thought. If you trust match, than there seems to be an easy way to get your T.max.

Match(E.ref,Z<0>,"leq") gives you a vector with indices of all values less or equal E.ref which corresponding rows then get deleted from the matrix. So the longest run in the remaining data is the greatest difference in the values of that index vector minus 1.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

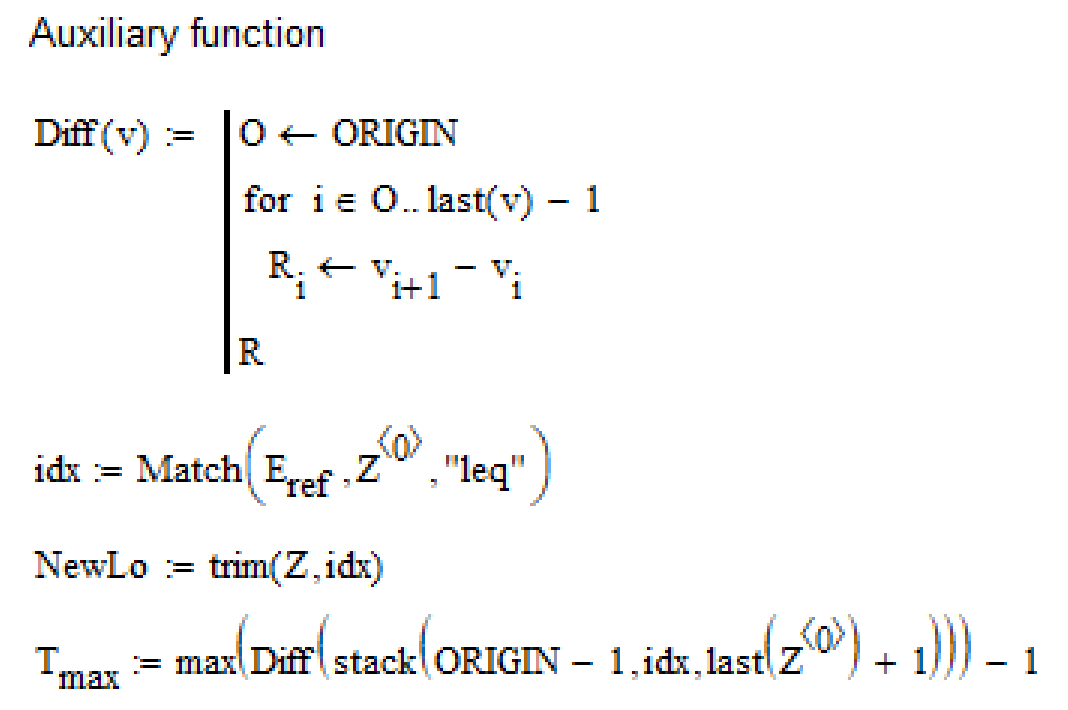

Small correction!

The above will miss the longest run if it occurs at the beginning or at the end of the data.

This will fix the problem:

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

Werner is correct--you need to sort on only the data column. Attached PDF might help.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

Dear Werner and Fred

Thank you both for your help. I finally managed to resolve the issue with help from Angela at Root Solutions.

The data was actually recorded at 0.5 seconds, and not 1 second as I thought. This accounts for the disparity in the counts. Also it does not exactly cover 48 hours but it does cover two days and two nights of recording. The "quiet" time in the middle is when the metro stopped running.

Your suggestions appear to me to be more efficient than my effort, but now that I have a working version that has been corroborated I will experiment with your suggestions.

Once again, thank you for your help.

I attach a copy of the full spreadsheet and my final working version.

Kind regards

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

Worksheet is missing.

To attach more than one file you either have to highlight both files at once or you have to attach the first, click post, click the three points at the upper right and chose Edit Reply and now you are allowed to attach another file.

This procedure is uncomfortable, cumbersome, absolutely not understandable nowadays and so it fits perfectly the philosophy of PTC how software and usability should be 😞

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

Apologies. Attached is a zip file with the revised version and the excel spreadsheet.

I am going to try your suggestions with the data, because I can see that my efforts are rather clumsy. It would be much neater if I could apply your suggestions. So I will have a go with that.

Kind Regards

Ken

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

No luck again.

The zip file is empty - its just 22 Bytes in size. No files inside.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

Please reattach your Mathcad worksheet to the message.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

Hello Vladimir,

Do you want the Worksheet or the data file, or both?

I do not have much luck with uploading files but I am very happy to try again and also send you a link if you can confirm what you need.

Kind Regards

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

Hello Vladimir

As a first attempt I am attaching the worksheet and the spreadsheet with the data. If it does not work then I will send you a link.

Kind Regards

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

Now the attachment is OK.

And what exactly is your question in the meantime?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

Hello Werner.

My question originally was how to extract the values that are more positive than -1 (Eref) and then to determine the longest continuous period where they are all more positive than Eref.

Then to sum all the periods more positive than Eref and more negative than Eref.

These values are then applied to the criterion formula.

Perhaps I did not do it in the most elegant way but I did eventually manage to do it with some help.

Kind regards