Estimating the delay between two signals with noise

Hi everyone!

This may be a very simple question for those of you who are experts in signal processing.

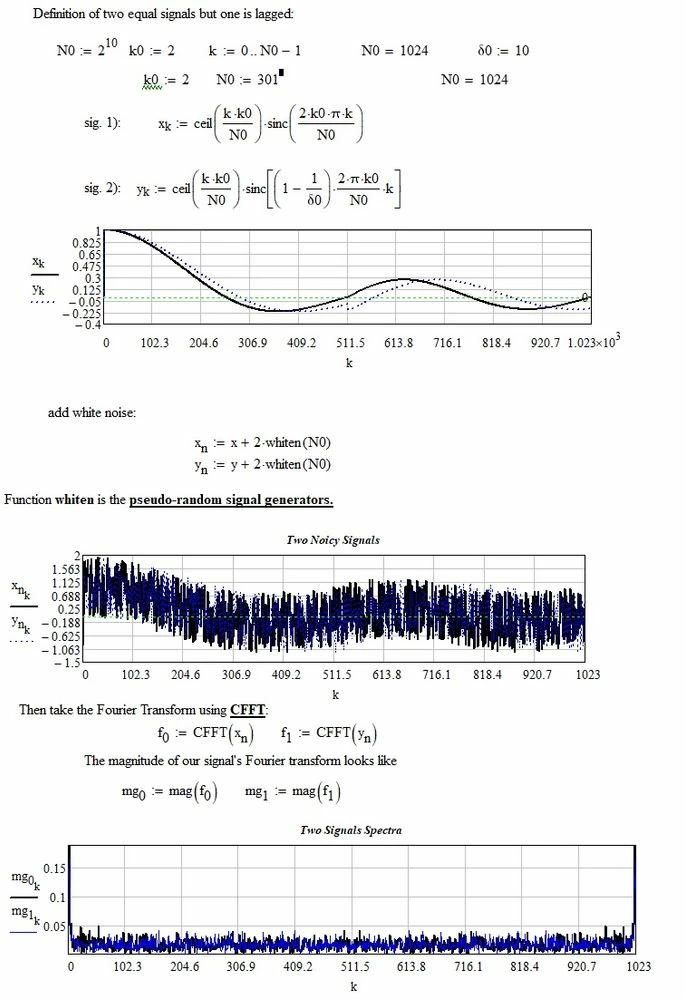

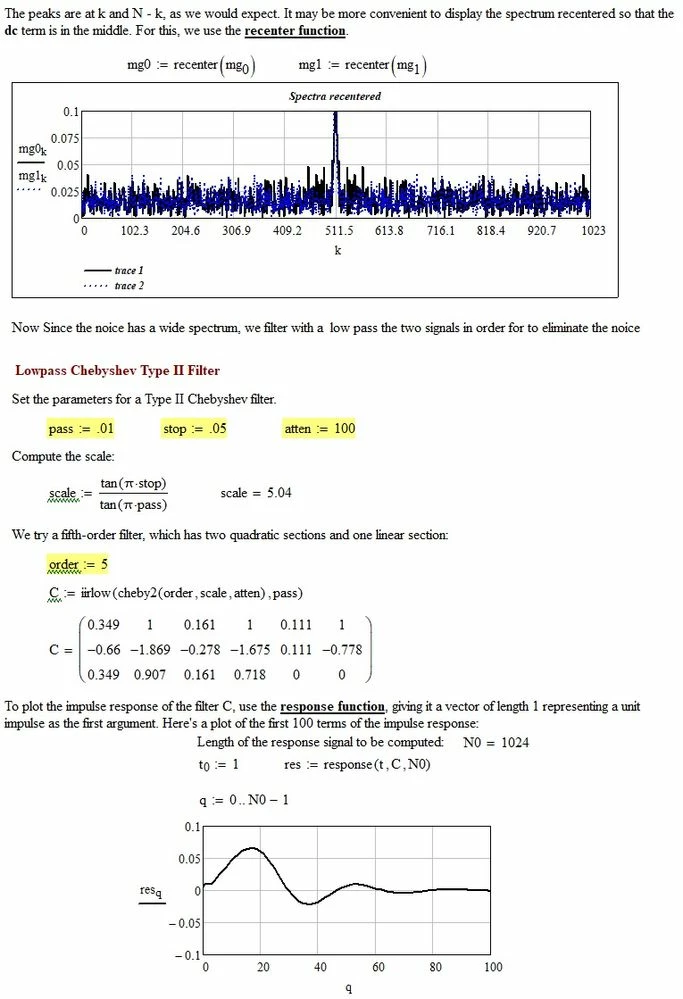

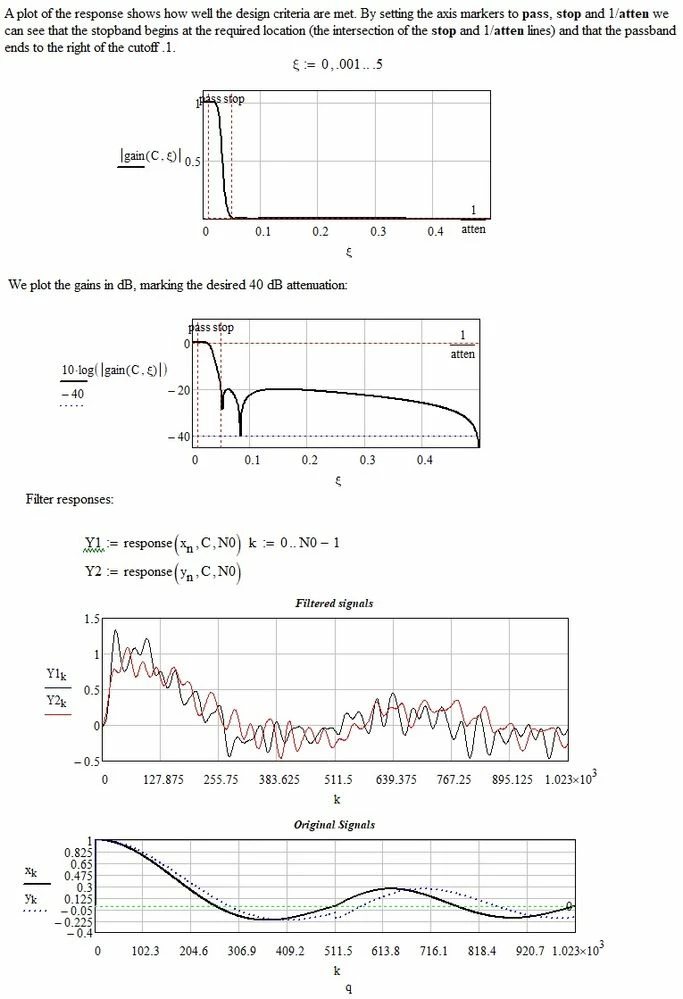

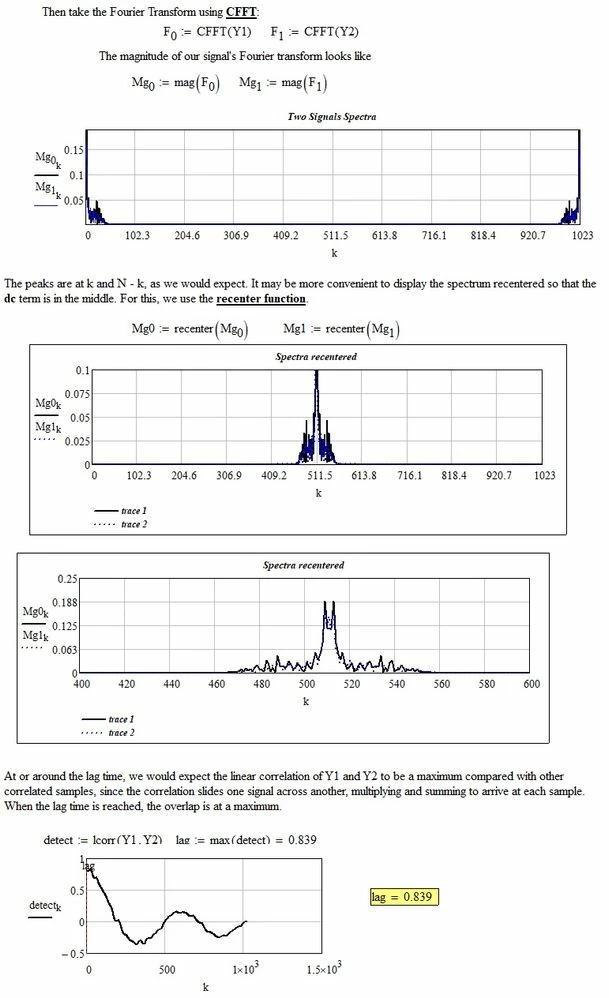

Suppose I have two time series y1(i) and y2(i) from two sensors, both affected by noise and measurement errors. The measurement times x(i) are of course also known. The sampling frequency fs can be assumed constant, so that x(i)=(1/fs)*i.

Is there a simple mathematical / statistical way to estimate the time delay (if one exists) between the two measurements? For example, the "human eye" can see that one signal, if shifted by a certain Delta-x, correlates pretty well with the other one.

Is this still possible if the two signals have different numerical magnitudes (say, the second signal is not only delayed but also weaker, and maybe has a different constant term)?
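To make the question concrete, one way to write down what I have in mind is the following. This is only an assumed model: the symbols a (gain), c (constant term), d (delay in samples) and e (noise) are introduced here purely for illustration.

$$
y_2(i) \approx a \, y_1(i - d) + c + e(i), \qquad \Delta x = \frac{d}{f_s}
$$

The quantity I am after is d (or equivalently Delta-x), while a and c are unknown and not of interest.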

Somehow I believe this should be possible using the correl(y1,y2) function, but the quick tests I ran did not seem to work.
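If correl(y1,y2) returns a single correlation coefficient, it compares the two series only at zero shift. Below is a minimal sketch of the alternative: scan over candidate shifts and keep the one with the highest correlation coefficient. It is written in Python with NumPy purely for illustration. The function name `estimate_delay`, the parameter `max_lag` and the synthetic test signals are all hypothetical and not taken from my real data. Because the Pearson coefficient ignores scale and offset, a weaker second signal with a different constant term should not matter under these assumptions.

```python
import numpy as np

def estimate_delay(y1, y2, fs, max_lag):
    """Scan integer lags in [-max_lag, max_lag] (must be well below the series
    length) and return (delay_seconds, best_corr).
    A positive delay means y2 lags behind y1."""
    y1 = np.asarray(y1, dtype=float)
    y2 = np.asarray(y2, dtype=float)
    n = min(len(y1), len(y2))
    best_lag, best_r = 0, -np.inf
    for lag in range(-max_lag, max_lag + 1):
        # Keep only the samples where the shifted series overlap.
        if lag >= 0:
            a, b = y1[:n - lag], y2[lag:n]
        else:
            a, b = y1[-lag:n], y2[:n + lag]
        # Pearson correlation: insensitive to gain and constant offset.
        r = np.corrcoef(a, b)[0, 1]
        if r > best_r:
            best_lag, best_r = lag, r
    return best_lag / fs, best_r

# Synthetic test data (assumed values, for illustration only).
rng = np.random.default_rng(0)
fs = 100.0                      # sampling frequency in Hz
x = np.arange(1000) / fs        # measurement times x(i) = i / fs

def s(t):
    # Underlying noise-free signal seen by both sensors.
    return np.sin(2 * np.pi * 0.7 * t) + 0.5 * np.sin(2 * np.pi * 1.9 * t)

true_delay = 0.25               # seconds
y1 = s(x) + 0.1 * rng.standard_normal(x.size)
# Second sensor: delayed, weaker, with a different constant term.
y2 = 0.4 * s(x - true_delay) + 3.0 + 0.1 * rng.standard_normal(x.size)

delay, r = estimate_delay(y1, y2, fs, max_lag=100)
print(f"estimated delay: {delay:.3f} s (correlation {r:.3f})")
```

The resolution of this sketch is one sample (1/fs), and `max_lag` has to be chosen larger than the largest delay one expects. For strongly periodic signals several shifts can give nearly the same correlation, so the result would need to be checked against the plots.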

Thanks a lot in advance!

Best regards

Claudio