Thundering Herd Scenarios in ThingWorx

Written by Jim Klink, Edited by Tori Firewind

Introduction

The thundering herd topic is quite vast, but it can be broken down into two main categories: the “data flood” and the “reconnect storm”. The “data flood” scenario concerns what happens to the business logic, and it affects both Factory and Connected Products use cases. The “reconnect storm” scenario concerns bringing many, many devices back online in a short time, and it largely affects Connected Products use cases.

Think of Connected Products as a thundering stampede of many small buffalo. A Factory thundering herd scenario, by contrast, is a stampede of a couple of massive brontosauruses: far fewer in number, but still carrying lots of persisted data to send back in. This article focuses on managing the “reconnect storm” scenario by delaying the return of individual buffalo to reduce the intensity of the stampede. It provides the insights needed to configure ThingWorx edge applications to minimize the effect of a server down scenario.

The C-SDK will be used for examples, but the general principles will apply to any of the ThingWorx edge options (EMS, .Net SDK, Java SDK). This article also references the ExampleAgent application which is built using the C-SDK. The ExampleAgent is available for download as an attachment to this post. It offers an easily configurable edge solution for Windows and Linux that can be used for the following purposes:

- Foundation for rapid development of a robust custom edge application based on the ThingWorx C-SDK for use by customers and partners.

- Full featured, well documented, ‘C’ source code example of developing an application using the ThingWorx C-SDK.

A “local” issue is one that affects a single agent, such as a loss of connectivity due to a hardware malfunction or local network problems. Local issues are quite common in the IoT world, and recovery usually isn’t much of a challenge. A “global” issue occurs when many agents disconnect simultaneously, usually because there is an issue with the ThingWorx server itself (though the Load Balancer, Connection Server, or web hosting software could also be the source). It might be a scheduled software update or unexpected downtime. Either way, it’s important to consider how the fleet of agents will respond if ThingWorx suddenly becomes unavailable.

There are two broad issues to consider in a situation like this. The first is preserving the agent’s data so that it can be sent when the connection becomes available again. In the C-SDK this can be done with the offline file storage system, which covers properties, events, and services. Offline storage is configured in the twConfig.h file of the C-SDK. The second issue is the number of agents seeking to reconnect to the server in a short period of time once it is available again.

Of course, if revenue is based on uptime, perhaps persisting data is less critical and can be lost, making things simple. However, in most cases, this data will need to be stored on the edge device until reconnect. Then, once the server comes back up, suddenly all of this data comes streaming in from all of the many edge devices simultaneously.

This flood of both data and reconnection of a multitude of agents can create what is called a “thundering herd” scenario, in which ThingWorx can become backlogged with data processing requests, data can be lost if the queues are overwhelmed, or worst-case, the Foundation server can become unresponsive once again. This is when outages become costly and drag on longer than necessary. Several factors can lead to a thundering herd scenario, including the number of agents in the fleet, the amount of stored data per agent, the amount of data ordinarily sent by these devices, which is sent side-by-side with the stored data upon reconnection, and how much processing occurs once all of this data is received on the Foundation server.

The easiest way to mitigate a potential thundering herd scenario, and this is considered a ThingWorx best practice as well, is to randomize the reconnection of devices. Each agent can be configured to delay itself by a random amount of time before attempting to reconnect after a loss of connectivity. This random delay then distributes the number of assets connecting at a time over a longer period, thus minimizing the impact of the reconnections on ThingWorx. There are several configuration settings that help in this regard.
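The following C sketch illustrates why a random delay helps. It is a standalone simulation, not ThingWorx code. Each of `num_agents` agents picks a uniform random delay between 0 and `max_delay_s`, measured in whole seconds, and the function reports how many agents land in the busiest one-second window. The function name and the whole-second granularity are assumptions made for illustration.

```c
#include <assert.h>
#include <stdlib.h>

/* Simulate num_agents agents that each wait a uniform random number of whole
 * seconds in [0, max_delay_s] before reconnecting, and return the number of
 * agents that land in the busiest one-second window (-1 on allocation failure). */
int peak_connects_per_second(int num_agents, int max_delay_s, unsigned int seed)
{
    assert(num_agents >= 0 && max_delay_s >= 0);

    int buckets = max_delay_s + 1;
    int *counts = calloc((size_t)buckets, sizeof *counts);
    if (counts == NULL)
        return -1;

    srand(seed);
    for (int i = 0; i < num_agents; i++)
        counts[rand() % buckets]++;   /* slight modulo bias is fine for an illustration */

    int peak = 0;
    for (int s = 0; s < buckets; s++)
        if (counts[s] > peak)
            peak = counts[s];

    free(counts);
    return peak;
}
```

With no delay (`max_delay_s = 0`), all 10,000 simulated agents reconnect in the same second. With a 0 to 59 second window, the peak drops to roughly 10,000 / 60 ≈ 167 per second, plus some random variation.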

Configuring the Herd (C-SDK)

The C-SDK is great at managing agent connectivity and offers many options for fine-tuning connections. The WebSocket connection is managed by the SDK layer of the edge device, which also manages the retry process. To review how connections are made, see the C-SDK source file src\api\twApi.c, specifically the function twApi_Connect().

The ExampleAgent uses custom configuration files to manage this process from the application layer, a more robust and complete solution. Detailed here are the configuration options in the ExampleAgent attached to this post, most of which can be found in its ws_connection.json configuration file:

- connect_timeout is used throughout the C-SDK as the time to wait for a WebSocket connection to be established (i.e. the ‘timeout’ value). This is the maximum delay for the socket to be established or to send and receive data. If the connection is established sooner, a success code is returned. If it is not established within the configured timeout period, an error is returned. For reference, 10 seconds is a reasonable value.

- connect_retries is the number of times the SDK will attempt to establish a connection before twApi_Connect() returns an error. Setting this to -1 makes the SDK retry indefinitely until a connection is established.

- connect_retry_interval is the delay between connection retries.

- max_connect_delay is a delay applied before the SDK even enters the retry loop governed by connect_retries and connect_retry_interval. The SDK calls twAddConnectionDelay(), which waits a random amount of time between 0 and the value of this parameter. This random delay is applied only once per call to twApi_Connect(). It is nonetheless the parameter most critical to preventing thundering herd scenarios (as discussed above).
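The connection flow described in the list above can be sketched roughly as follows. This is not the C-SDK source; see twApi_Connect() in src\api\twApi.c for that. The function and callback names here are hypothetical, and the sketch assumes all intervals are in milliseconds. The stub server exists only so the loop can be exercised without a real connection.

```c
#include <assert.h>
#include <limits.h>
#include <stdlib.h>

/* Hypothetical callbacks, so the sketch does not depend on the real SDK. */
typedef int  (*connect_attempt_fn)(void *ctx);              /* returns 0 on success */
typedef void (*sleep_ms_fn)(void *ctx, unsigned int ms);

/* Sketch of the described flow: ONE random delay in [0, max_connect_delay_ms],
 * then up to connect_retries attempts (-1 = retry forever),
 * separated by connect_retry_interval_ms. */
int connect_with_delay(int max_connect_delay_ms, int connect_retries,
                       unsigned int connect_retry_interval_ms,
                       connect_attempt_fn attempt, sleep_ms_fn sleep_ms, void *ctx)
{
    assert(max_connect_delay_ms >= 0 && max_connect_delay_ms < INT_MAX);
    assert(attempt != NULL && sleep_ms != NULL);

    /* The randomized delay happens only once per call.
     * rand() is used for brevity; its range may be as small as 0..32767. */
    sleep_ms(ctx, (unsigned int)(rand() % (max_connect_delay_ms + 1)));

    for (int tries = 0; connect_retries < 0 || tries < connect_retries; tries++) {
        if (tries > 0)
            sleep_ms(ctx, connect_retry_interval_ms);   /* fixed interval between retries */
        if (attempt(ctx) == 0)
            return 0;                                    /* connected */
    }
    return -1;                                           /* retries exhausted */
}

/* Hypothetical test stub: fails failures_left times, then succeeds,
 * and adds up the simulated sleep time instead of really sleeping. */
struct stub_server {
    int failures_left;
    int attempts;
    unsigned long slept_ms;
};

int stub_attempt(void *ctx)
{
    struct stub_server *s = ctx;
    s->attempts++;
    if (s->failures_left > 0) {
        s->failures_left--;
        return -1;
    }
    return 0;
}

void stub_sleep(void *ctx, unsigned int ms)
{
    struct stub_server *s = ctx;
    s->slept_ms += ms;
}
```

The random delay sits outside the retry loop. Once an agent is inside the loop, its retries follow a fixed interval, which is the limitation described in the next paragraph.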

Configuring the SDK agents to reconnect in this way is critical, but there are also some drawbacks. First, while twApi_Connect() is running, there is no clean way of shutting the agent down. Second, the agent applies only ONE randomized delay per call of twApi_Connect(). If reconnection cannot occur immediately, many agents may therefore still try to reconnect at once. Consider this when choosing values for these parameters.

ExampleAgent Design

The ExampleAgent provided here is a fully implemented, configurable application, like the EMS in terms of functionality, but containing only simulated data. The data capture component is missing here and has to be custom developed. Attached alongside this source code is extensive documentation that explains how to get the application set up and configured. This isn’t meant to be used directly in a production environment.

Please note that the ExampleAgent is provided as-is; it is not an officially released product by PTC. This disclaimer includes the ExampleAgent source code, build process, documentation, deliverables as well as any ExampleAgent modifications to the official releases of the C-SDK or the SCM extension product. Full and sole responsibility for the use, deployment, reliability, and accuracy of any ExampleAgent related code, documentation, etc. falls to the user, and any use of the ExampleAgent is an implicit agreement with this disclaimer.

The ExampleAgent was developed by PTC sales and services to help in the Edge application development process. For assistance, support, or additional development, an authorized statement of work is needed. Please Note: PTC support is not aware of the existence of the ExampleAgent and cannot provide assistance.

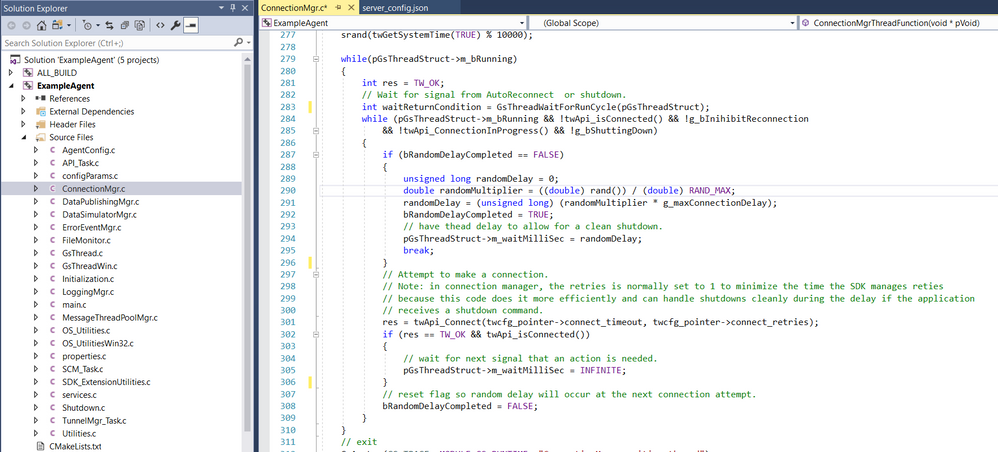

Because of the small downside to configuring the twApi_Connect() function directly, as discussed above, an alternative approach is given here as well. The ExampleAgent module ConnectionMgr.c calls twApi_Connect() on a dedicated connection thread. The ConnectionMgrThreadFunction() function contains the source code needed to understand this process.

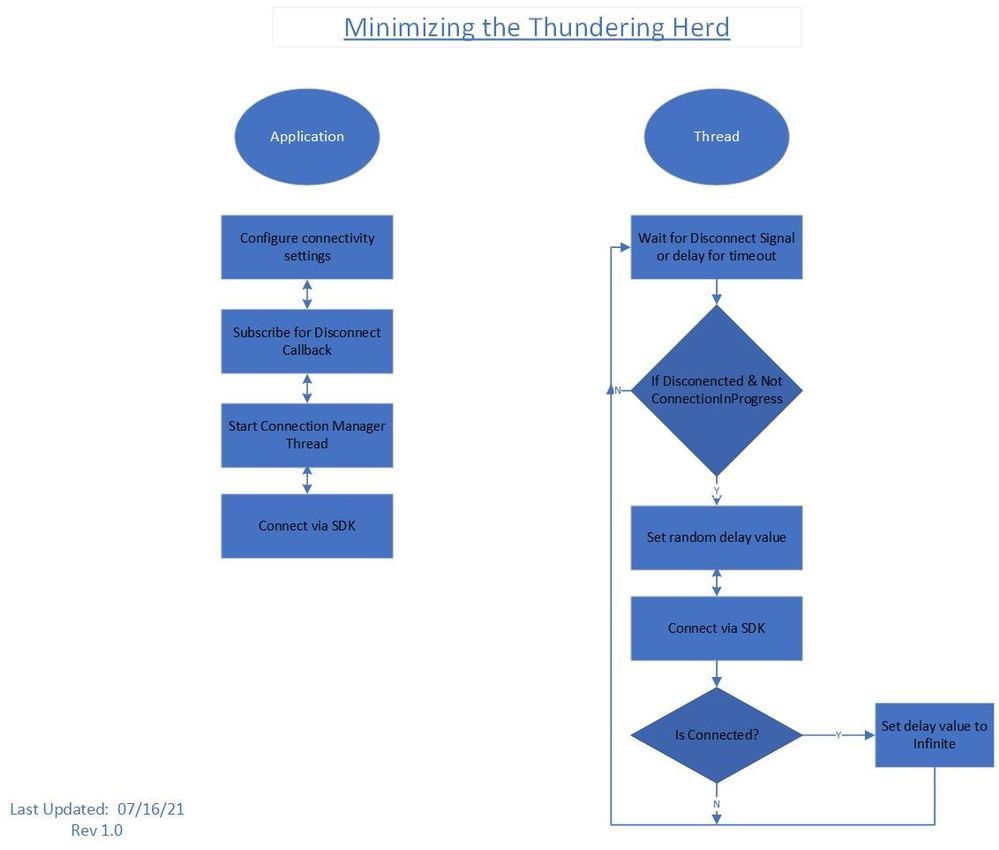

The ConnectionMgr.c workflow and source code visualization via Microsoft Visual Studio are in the diagrams below.

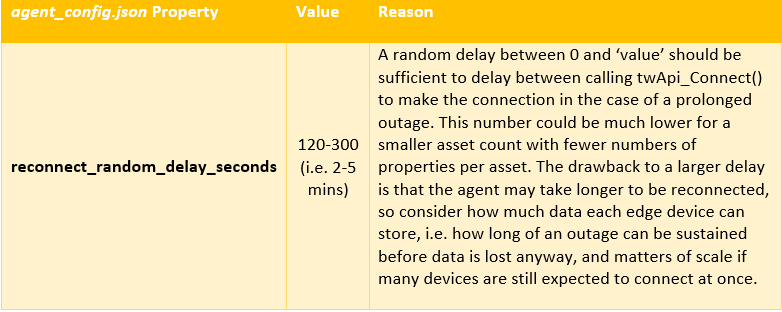

The ExampleAgent defines its own randomized delay to mitigate the thundering herd scenario while still responding cleanly to shutdown requests. Here, the randomized delay is configured by the parameter reconnect_random_delay_seconds in the agent_config.json file. ConnectionMgrThreadFunction() controls the calling of twApi_Connect(), so it waits a newly randomized delay EVERY time before calling the reconnect function. The reconnect function is called from a separate thread, which keeps resources available for data processing and for checking shutdown signals and other conditions.
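Below is a minimal sketch of this application-layer approach, assuming POSIX threads and C11 atomics. It is loosely modeled on the behavior described for ConnectionMgrThreadFunction() but is not the ExampleAgent source. `struct conn_mgr`, `conn_mgr_thread()`, the `try_connect` callback (a stand-in for a wrapper around twApi_Connect()) and the `flaky_connect` stub are all hypothetical.

The sketch shows two differences from the SDK-level loop:

- A new random delay is drawn before every attempt.
- The delay is slept in short slices, so a shutdown request is noticed within about `SLICE_MS`.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <pthread.h>
#include <stdatomic.h>
#include <stdbool.h>
#include <stdint.h>
#include <time.h>

#define SLICE_MS 10L   /* how often the delay loop checks for shutdown */

/* Hypothetical connection-manager state, loosely modeled on ConnectionMgr.c. */
struct conn_mgr {
    atomic_bool shutdown;            /* set by another thread to request exit */
    atomic_bool connected;           /* set once an attempt succeeds */
    atomic_int attempts;             /* number of connect attempts made */
    long max_delay_ms;               /* e.g. reconnect_random_delay_seconds * 1000 */
    uint32_t rng_state;              /* per-thread PRNG state */
    int (*try_connect)(void *ctx);   /* stand-in for a wrapper around twApi_Connect() */
    void *ctx;
};

void sleep_ms(long ms)
{
    struct timespec ts = { ms / 1000, (ms % 1000) * 1000000L };
    nanosleep(&ts, NULL);
}

/* xorshift32: a small per-thread PRNG, so rand() is not shared across threads. */
static uint32_t next_rand(uint32_t *state)
{
    uint32_t x = *state ? *state : 1u;   /* xorshift must not start at 0 */
    x ^= x << 13;
    x ^= x >> 17;
    x ^= x << 5;
    *state = x;
    return x;
}

void conn_mgr_init(struct conn_mgr *m, long max_delay_ms, uint32_t seed,
                   int (*try_connect)(void *), void *ctx)
{
    assert(max_delay_ms >= 0 && try_connect != NULL);
    atomic_init(&m->shutdown, false);
    atomic_init(&m->connected, false);
    atomic_init(&m->attempts, 0);
    m->max_delay_ms = max_delay_ms;
    m->rng_state = seed;
    m->try_connect = try_connect;
    m->ctx = ctx;
}

void *conn_mgr_thread(void *arg)
{
    struct conn_mgr *m = arg;
    while (!atomic_load(&m->shutdown)) {
        if (atomic_load(&m->connected)) {
            sleep_ms(SLICE_MS);          /* real code would watch for disconnects here */
            continue;
        }
        /* A fresh random delay before EVERY attempt, not just the first one. */
        long delay = (long)(next_rand(&m->rng_state) % (uint32_t)(m->max_delay_ms + 1));
        /* Sleep in short slices so a shutdown request is honored promptly. */
        for (long waited = 0; waited < delay && !atomic_load(&m->shutdown); waited += SLICE_MS)
            sleep_ms(SLICE_MS);
        if (atomic_load(&m->shutdown))
            break;
        atomic_fetch_add(&m->attempts, 1);
        if (m->try_connect(m->ctx) == 0)
            atomic_store(&m->connected, true);
    }
    return NULL;
}

/* Hypothetical stub: fails failures_left times, then succeeds. */
struct flaky_server { int failures_left; };

int flaky_connect(void *ctx)
{
    struct flaky_server *fs = ctx;
    if (fs->failures_left > 0) {
        fs->failures_left--;
        return -1;
    }
    return 0;
}
```

A caller would start `conn_mgr_thread()` with `pthread_create()`. When the agent is asked to exit, it sets `shutdown` and then calls `pthread_join()` on the thread. Build with `-pthread`.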

Recommended Values

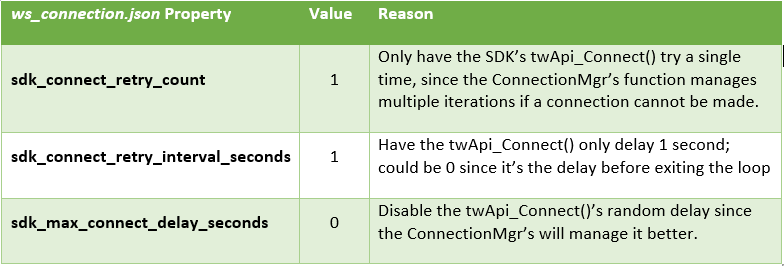

These recommendations are based around managing the reconnection process from the application layer. These may be different if the C-SDK is configured directly, but creating application layer management is recommended and provided in the ExampleAgent attached. The ExampleAgent is configured by default to simplify the SDK layer’s involvement.

The SDK-layer configuration options in the recommended values above tell the SDK to try to connect just once, after just 1 second.

There is no official recommendation for these values, because every use case is different and will require its own fine-tuning.

The reconnect_random_delay_seconds setting then handles the retry process from within the application layer of the ExampleAgent.

Conclusion

To reduce the chances of a thundering herd scenario, configure the fleet to reconnect after differing random delays. The larger the random delay, the longer it takes for the fleet to come back online and for fleet data to be received. More complex ThingWorx deployment architectures, such as container-based deployments like Kubernetes or ThingWorx High Availability (HA) clusters, can also help absorb the increased peak load during a thundering herd event. Even so, randomized reconnect delays remain an effective tool.
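As a rough back-of-the-envelope estimate of this trade-off, assume that each of $N$ agents picks an independent delay uniformly between 0 and $D$ seconds, and that the server is already back up:

$$
\text{expected reconnects per second} \approx \frac{N}{D}, \qquad \text{time until the last agent starts reconnecting} \le D
$$

For example, 10,000 agents with $D = 60$ s gives about 167 reconnects per second. With $D = 600$ s, that falls to about 17 per second, but it can take up to 10 minutes for the whole fleet to begin reconnecting.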