I need your advice on data space management

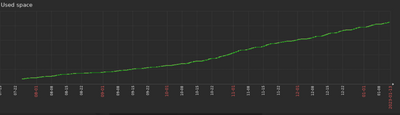

This is the data growth graph for my Windchill production server.

The curve keeps climbing, is now at the terabyte level, and is creating serious costs.

How do you manage storage space here?

I keep all versions in the system. Do you keep them too, or do you have another recommendation? Thanks.

- Labels: Bus_System Administration

- Tags: server management

Versions might not be your issue, but iterations certainly are. You need to purge. Purge rules can be set up and run automatically. I have some queries that can help identify large files and the contexts with the most purgeable items (see the presentation from 2022 PTCUser). After you purge, you should also run the remove unreferenced files job on the vaults to delete content that is no longer referenced in the database. When you delete items in Windchill, their content is not deleted immediately; it just becomes unreferenced. This job removes those files from the vaults for good.
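
To get a rough feel for how much a purge rule might free before setting one up, the idea above can be sketched in a few lines of Python. This is only an illustration, not the queries from the presentation and not a Windchill API: the `IterationRecord` fields, the `purgeable_bytes` helper, and the sample numbers are all hypothetical, and it assumes you have already exported one row per iteration with its content size. Real purge rules have more criteria than a simple "keep the latest N iterations of each revision".

```python
from collections import defaultdict
from typing import NamedTuple


class IterationRecord(NamedTuple):
    """One row of a hypothetical export: one iteration of one object."""
    number: str      # object number (hypothetical sample values below)
    revision: str    # revision label, e.g. "A"
    iteration: int   # iteration within that revision
    size_bytes: int  # content size attributed to this iteration


def purgeable_bytes(records, keep_latest=1):
    """Return {number: bytes} that a 'keep the latest N iterations of
    each revision' rule would free, largest first."""
    by_revision = defaultdict(list)
    for rec in records:
        by_revision[(rec.number, rec.revision)].append(rec)

    freed = defaultdict(int)
    for (number, _revision), recs in by_revision.items():
        # Newest iteration first, so the slice below skips the ones we keep.
        recs.sort(key=lambda r: r.iteration, reverse=True)
        for rec in recs[keep_latest:]:
            freed[number] += rec.size_bytes

    return dict(sorted(freed.items(), key=lambda kv: kv[1], reverse=True))


# Made-up sample data, sizes in bytes.
sample = [
    IterationRecord("PART-001", "A", 1, 100),
    IterationRecord("PART-001", "A", 2, 120),
    IterationRecord("PART-001", "A", 3, 130),
    IterationRecord("PART-001", "B", 1, 140),
    IterationRecord("PART-002", "A", 1, 500),
    IterationRecord("PART-002", "A", 2, 510),
]

print(purgeable_bytes(sample))
```

Objects with nothing to purge do not appear in the result. Keep in mind that, as described above, purging only makes the content unreferenced; the disk space comes back after the remove unreferenced files job has run on the vaults.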

Hi, where can one find the mentioned presentation from 2022 PTCUser?

If I remember right, we were previously on an unsustainable data path due to publishing. This was several years ago, but I think we were publishing a STEP file for every check-in. We turned that off, and now users publish on demand, only when needed.

You can configure STEP and PDF publishing to run only upon release. That is what we do, and it can cut the growth down.