How to calculate a minimum value for which 95% of future sampled values will be higher?

Greetings,

I have several sets of data for the tested strength of a material. The maximum number of data points in each data set is 5, so I really don't have a lot to work from. Also, I'm not as handy with statistics as I would like to be, so I'm throwing this to you guys. I need to feed this data into a function that outputs a single value: the predicted safe lower limit of the data set, above which 95% of all future tested samples will fall. I'm basing this function on the Kolmogorov–Smirnov statistic and Student's t-test.
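Here is a minimal sketch of one common Student-t approach. It assumes the strengths are roughly normally distributed and computes the one-sided 95% lower prediction limit for a single future test, x̄ − t(0.95, n−1) · s · √(1 + 1/n). This is not necessarily what the original worksheet computes. The function name `lower_prediction_limit`, the `T95` table (standard one-sided 95% t critical values, keyed directly by degrees of freedom) and the example data are all hypothetical.

```python
import math
import statistics

# One-sided 95% Student t critical values, keyed by degrees of freedom (df = n - 1).
# Keying by df avoids index arithmetic; the table stops at df = 10 (n = 11 points).
T95 = {1: 6.314, 2: 2.920, 3: 2.353, 4: 2.132, 5: 2.015,
       6: 1.943, 7: 1.895, 8: 1.860, 9: 1.833, 10: 1.812}


def lower_prediction_limit(samples):
    """Return mean - t * s * sqrt(1 + 1/n).

    Assuming normally distributed strengths, a single future test result
    falls above this value with about 95% probability.
    """
    n = len(samples)  # number of data points, independent of any index origin
    if n < 2:
        raise ValueError("need at least two samples to estimate the spread")
    df = n - 1
    mean = statistics.mean(samples)
    s = statistics.stdev(samples)  # sample standard deviation (divides by n - 1)
    return mean - T95[df] * s * math.sqrt(1 + 1 / n)


strengths = [10.0, 12.0, 11.0, 13.0, 9.0]  # made-up example data
print(round(lower_prediction_limit(strengths), 3))  # 7.307
```

Keying the table by degrees of freedom sidesteps the index arithmetic (and the ORIGIN dependence) discussed in the replies.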

The intention is to determine a safe strength for the material from the test data without being too conservative.
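Strictly speaking, "a value that 95% of all future samples will exceed" describes a one-sided tolerance bound, x̄ − k · s. Here k is chosen so the bound sits below at least 95% of the population with a stated confidence (here also 95%). This bound is more conservative than a prediction limit for a single future result, and the chosen confidence level is the main lever on how conservative it is.

The sketch below makes several assumptions. It uses a closed-form approximation for k, the one given in the NIST/SEMATECH e-Handbook of Statistical Methods, instead of the exact noncentral-t value. That approximation becomes noticeably rough at n = 3, and all helper names are hypothetical.

```python
import math
import statistics
from statistics import NormalDist


def tolerance_k(n, coverage=0.95, confidence=0.95):
    """Approximate one-sided normal tolerance factor k (closed-form approximation).

    mean - k * s is meant to lie below at least `coverage` of the population,
    with the stated `confidence`. An exact k needs the noncentral t distribution.
    """
    z_p = NormalDist().inv_cdf(coverage)
    z_g = NormalDist().inv_cdf(confidence)
    a = 1 - z_g ** 2 / (2 * (n - 1))
    b = z_p ** 2 - z_g ** 2 / n
    if a <= 0:
        raise ValueError("too few samples for this approximation")
    return (z_p + math.sqrt(z_p ** 2 - a * b)) / a


def lower_tolerance_limit(samples, coverage=0.95, confidence=0.95):
    """Lower bound mean - k * s using the approximate tolerance factor."""
    n = len(samples)
    k = tolerance_k(n, coverage, confidence)
    return statistics.mean(samples) - k * statistics.stdev(samples)


strengths = [10.0, 12.0, 11.0, 13.0, 9.0]  # made-up example data
print(round(tolerance_k(5), 2))                    # 4.19
print(round(lower_tolerance_limit(strengths), 2))  # 4.37
```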

I wrote this to do what I intend; however, I'm not 100% sure whether what I'm doing is based on sound statistical thinking. Below is a screenshot of the function, and the worksheet is attached. Please play with it and let me know your thoughts.

Accepted Solutions

- You set n to last(x), which makes n dependent on the actual value of ORIGIN. If ORIGIN=0, this sets n to the number of elements in x minus 1; if ORIGIN=1, it makes n the number of elements in x. But ORIGIN can be set to an arbitrary value... I would set n to length(x), or length(x)-1 if that is what you need. Note that this ripples through, because...

- You set df to n-1 and then index into the t95 vector with df-1, so you're taking the 2-but-last element. Is that intentional?

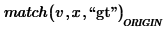

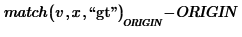

- Your internal function D(x,v) tries to find the number of elements in x whose value is below v. Have you looked at the built-in function match()? The expression:

gives you the index of the first element in x that is above v. With x sorted (as you do now), this:

gives you the number of elements in x that are below v, or an error if none of the values in x are above v, but I guess that your v will always be in range. And besides, you don't need this at all, because...

- You use the function D() on an array generated by the rnorm random number generator with the given mean and stdev. However, you don't need to generate the random numbers at all: from the given mean and stdev you can directly calculate the fraction of values that lie below a given value x with:
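In Python terms, the two approaches look roughly like this. The first counts simulated draws that fall below v, which is what D() did on the rnorm output; a binary search on the sorted draws stands in for match(). The second evaluates the normal CDF directly, which is what Mathcad's pnorm computes. Function names and numbers are illustrative assumptions.

```python
import bisect
import random
from statistics import NormalDist


def fraction_below_simulated(v, mean, sd, n_draws=200_000, seed=1):
    """Estimate P(X < v) by drawing normal samples, sorting them and counting."""
    rng = random.Random(seed)
    draws = sorted(rng.gauss(mean, sd) for _ in range(n_draws))
    # On a sorted list, bisect_left returns how many draws are strictly below v
    return bisect.bisect_left(draws, v) / n_draws


def fraction_below_exact(v, mean, sd):
    """Exact P(X < v) for a normal distribution: the normal CDF."""
    return NormalDist(mean, sd).cdf(v)


mean, sd, v = 11.0, 1.58, 8.5  # illustrative numbers
print(fraction_below_simulated(v, mean, sd))
print(fraction_below_exact(v, mean, sd))
```

The simulated estimate only approaches the exact value as the number of draws grows. It also costs a full sort on every call, which fits the speed-up reported later in the thread after switching to pnorm.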

Hope this helps.

Success!

Luc

Luc, you are saving my life right now. I wish I could give you more than 1 kudo.

Luc, I took your comments and modified the function as shown below. The function now sets ORIGIN = 0 internally, which will prevent this global from affecting the results. I took out the D function and used pnorm in my while loop. The function runs much, much faster!
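The loop itself isn't shown in text, so here is a hypothetical version of a pnorm-based search: step v down from the mean until the normal CDF drops to 5%. It converges (to within one step) on the inverse normal CDF, which Mathcad provides as qnorm, so such a loop could in principle be replaced by a single call. Plugging in a mean and standard deviation estimated from five or fewer points ignores the uncertainty in those estimates. The step size and function names are assumptions.

```python
from statistics import NormalDist


def lower_limit_by_search(mean, sd, tail=0.05, step=1e-3):
    """Step down from the mean until the normal CDF drops to `tail` (hypothetical loop)."""
    dist = NormalDist(mean, sd)
    v = mean
    while dist.cdf(v) > tail:
        v -= step
    return v


def lower_limit_closed_form(mean, sd, tail=0.05):
    """The same limit in one call, via the inverse normal CDF."""
    return NormalDist(mean, sd).inv_cdf(tail)


print(lower_limit_by_search(11.0, 1.58))
print(lower_limit_closed_form(11.0, 1.58))
```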

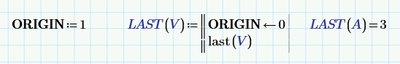

Don't (try to) set ORIGIN within a program.

1. It leads to confusion.

2. It doesn't work. Let me show you:

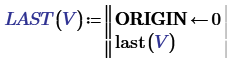

I define an array with three elements.

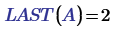

Normally (when ORIGIN = 0) the 9 would be at index 0 and the 7 at index 2, so last(A) should be 2.

I write a program, LAST, that determines the last index of its (array) argument but first sets the global value ORIGIN to 0:

Now of course:

But look here what happens if I set ORIGIN to 1 before calling LAST:

I guess you didn't try to see what happens if you change ORIGIN outside of your program.

Besides, you didn't do it properly: inside the program you set a variable named ORIGIN to 0, but that is not the global ORIGIN, which is labelled 'system'. (You didn't attach the worksheet, but I can tell because your ORIGIN is in italics.)
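A rough Python analogy of this scoping problem follows. It is not Mathcad code; `last` and `LAST` are stand-ins written to mimic the behaviour shown in the screenshots. Assigning ORIGIN inside the function only creates a local variable with that name, while the built-in-like `last()` keeps reading the global setting.

```python
ORIGIN = 0  # stand-in for the worksheet-wide (system) ORIGIN


def last(values):
    """Stand-in for last(): index of the final element, based on the global ORIGIN."""
    return ORIGIN + len(values) - 1


def LAST(values):
    ORIGIN = 0           # only creates a local variable that happens to be called ORIGIN
    return last(values)  # last() still reads the global ORIGIN


A = [9, 8, 7]
print(LAST(A))  # 2 while the global ORIGIN is 0

ORIGIN = 1
print(LAST(A))  # 3: the "ORIGIN = 0" inside LAST had no effect
```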

Advice: If you want to know the size of a vector (how many elements are in there), use length.

If you need one less than the length, use length - 1, NOT last.

If you want your program to work independently of the value of ORIGIN, use ORIGIN (the one labelled 'system') in your program at appropriate place(s).
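Translated to Python, the same advice might look like this sketch. A hypothetical `origin` argument plays the role of the system ORIGIN. Counts come from len() and never depend on the origin, and the origin is only used when converting an index into a position.

```python
def sample_size(values):
    """Number of data points; like length(), it never depends on the origin."""
    return len(values)


def last_index(values, origin):
    """Index of the final element when indices start at `origin` (like last())."""
    return origin + len(values) - 1


def element(values, i, origin):
    """Return the element whose index is i, counting from `origin`."""
    pos = i - origin
    if not 0 <= pos < len(values):
        raise IndexError(f"index {i} is out of range for origin {origin}")
    return values[pos]


x = [12.1, 11.4, 12.8, 11.9, 12.3]  # made-up strength data
for origin in (0, 1, 5):
    n = sample_size(x)  # 5 for every origin
    df = n - 1          # 4 for every origin
    first = element(x, origin, origin)
    final = element(x, last_index(x, origin), origin)
    print(origin, n, df, last_index(x, origin), first, final)
```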

Success!

Luc

Thanks. Dang, I thought there would be an easier way to make this foolproof against ORIGIN being set to a different value.