Solved

How to speed up Mathcad Prime for large data sets?

- February 25, 2023

Does anyone know how to speed up Mathcad Prime (MCP) when processing large data sets?

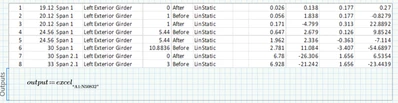

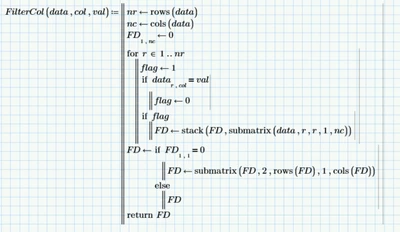

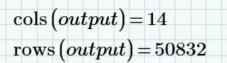

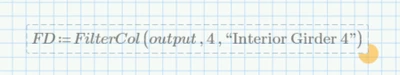

I am working with a 50832 x 14 data set that another program outputs to Excel. I would prefer to filter, sort, and manipulate the data in MCP rather than in Excel. However, executing even a simple filter step in MCP on a data set this large has proven impossible: the function never completes (endless spinning wheel), whereas the same step takes about 1 second in Excel.

Does anyone know how to speed this up, or have any other tricks for processing large data sets in MCP? I would really, really prefer to avoid Excel, but at this point I'll have to use it.

Thanks!