ThingWorx Cluster Down After MSSQL OS Updates

We have ThingWorx 9.3.0 deployed in HA with three ThingWorx nodes, three ZooKeeper nodes, and three Ignite nodes. Our database is MSSQL 2019 with two nodes set up for failover.

Recently, we found our ThingWorx cluster down after scheduled OS updates were applied to the MSSQL server nodes. We're looking for help tuning our platform-settings, and anything else that is needed, so the cluster can recover from temporary database outages during server maintenance.

Question: Do I just need to adjust "ClusteredModeSettings.ModelSyncTimeout" to the amount of time we expect it to take for our DB to return to availability?

Any other insights?

Investigation Findings:

This warning repeats many times in the Application log:

2023-02-27 00:33:13.594+0000 [L: WARN] [O: c.m.v.a.ThreadPoolAsynchronousRunner] [I: ] [U: ] [S: ] [P: thingworx2] [T: C3P0PooledConnectionPoolManager[identityToken->14wbh7taufjvcx41v83jrm|7e8297 7b]-AdminTaskTimer] com.mchange.v2.async.ThreadPoolAsynchronousRunner$DeadlockDetector@cb7a47d -- APPARENT DEADLOCK!!! Creating emergency threads for unassigned pending tasks!
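To see when these warnings started relative to the database maintenance window, it can help to count them per minute. The following is only a rough sketch: it assumes every Application log line starts with a timestamp in the same format as the line above, and the function name `count_deadlock_warnings` is made up for illustration.

```python
import re
from collections import Counter

# Matches the leading timestamp of a log line and captures it
# down to the minute, e.g. "2023-02-27 00:33".
TIMESTAMP_RE = re.compile(
    r"^(\d{4}-\d{2}-\d{2} \d{2}:\d{2}):\d{2}\.\d{3}[+-]\d{4} "
)


def count_deadlock_warnings(lines):
    """Count C3P0 'APPARENT DEADLOCK' warnings per minute."""
    per_minute = Counter()
    for line in lines:
        if "APPARENT DEADLOCK" not in line:
            continue
        match = TIMESTAMP_RE.match(line)
        if match:
            per_minute[match.group(1)] += 1
    return per_minute
```

Passing an open log file to the function (for example `count_deadlock_warnings(open(path))`, where `path` points at the Application log) returns a `Counter` keyed by minute, which makes it easy to line up the first warning with the time the MSSQL nodes were patched.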

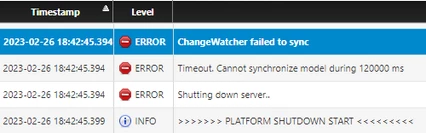

These errors seemed to mark the beginning of the cluster shutdown:

The timeout message about synchronization pointed me to the ClusteredModeSettings section of platform-settings, where I found ModelSyncTimeout set to 120000. The ClusteredModeSettings section for reference:

"ClusteredModeSettings": {

"PlatformId": "thingworx1",

"CoordinatorHosts": "zookeeper1:2181,zookeeper2:2181,zookeeper3:2181",

"ModelSyncPollInterval": 100,

"ModelSyncWaitPeriod": 3000,

"ModelSyncTimeout": 120000,

"ModelSyncMaxDBUnavailableErrors": 10,

"ModelSyncMaxCacheUnavailableErrors": 10,

"CoordinatorMaxRetries": 3,

"CoordinatorSessionTimeout": 90000,

"CoordinatorConnectionTimeout": 10000,

"MetricsCacheFrequency": 60000,

"IgnoreInactiveInterfaces": true,

"IgnoreVirtualInterfaces": true

},
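For comparing these settings against the length of a maintenance window, here is a small sketch that converts the timing values to seconds. It assumes the values are in milliseconds, which I have not confirmed for every key, and the helper name `durations_in_seconds` is just for illustration.

```python
import json

# Timing-related values copied from the ClusteredModeSettings excerpt above.
PLATFORM_SETTINGS = json.loads("""
{
  "ClusteredModeSettings": {
    "ModelSyncPollInterval": 100,
    "ModelSyncWaitPeriod": 3000,
    "ModelSyncTimeout": 120000,
    "CoordinatorSessionTimeout": 90000,
    "CoordinatorConnectionTimeout": 10000
  }
}
""")

# Assumption: each of these keys holds a duration in milliseconds.
DURATION_KEYS = [
    "ModelSyncPollInterval",
    "ModelSyncWaitPeriod",
    "ModelSyncTimeout",
    "CoordinatorSessionTimeout",
    "CoordinatorConnectionTimeout",
]


def durations_in_seconds(settings):
    """Return the duration settings converted from milliseconds to seconds."""
    cluster = settings["ClusteredModeSettings"]
    return {key: cluster[key] / 1000 for key in DURATION_KEYS if key in cluster}


for key, seconds in durations_in_seconds(PLATFORM_SETTINGS).items():
    print(f"{key}: {seconds:g} s")
```

Under that assumption, ModelSyncTimeout works out to 120 seconds (two minutes) and CoordinatorSessionTimeout to 90 seconds, so a database failover or reboot that takes longer than a couple of minutes would outlast the current sync timeout.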