How to interpret Predictive Scoring & Important Field Weights

Hello community, I hope you are all doing well.

I have a question about ThingWorx Analytics, specifically about how to use the Predictive Scoring option available in Analytics Builder and how to interpret its results. I completed the learning path "Vehicle Predictive Pre-Failure Detection with ThingWorx Platform", which helped me understand several ThingWorx Analytics concepts and let me generate predictions for values in "real time".

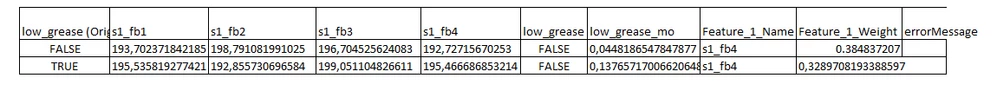

I would like to complement the predictions with an uncertainty probability or other practical information. Unfortunately, the guide does not cover features that add such information, like Predictive Scoring or confidence models. I tried it myself, using the data and the model created in the guide to run Predictive Scoring tests. The tests ran successfully, but I do not know how to give the Important Field Weights a practical meaning. In addition, according to the ThingWorx Analytics 9 documentation, confidence models (which provide a probability of uncertainty about the prediction) are only available for continuous or ordinal data.

So I would like to know whether there is any additional information I can use to complement the predictions in the "Vehicle Predictive Pre-Failure Detection with ThingWorx Platform" example, and how I should interpret the Important Field Weights.

I have attached an image with two Predictive Scoring results and their Important Field Weights (Feature Weight).

Thank you for reading.