ThingWorx Analytics: Creating a New Predictive Model stays in RUNNING status

Hello All,

I am having a problem with the Create New Predictive Model process.

Background:

- Local ThingWorx H2 8.0.4 (port 8080)

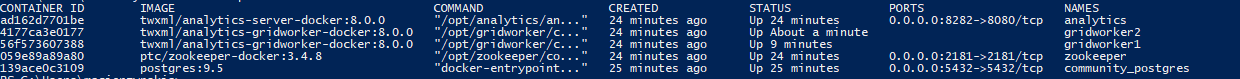

- Local Analytics Server 8.0.0 (port 8282), running in Docker

- 2 x gridworker (8 GB RAM), Tomcat (8 GB RAM)

- UploadThing 1.3

- BeanPro Sample Data

Issue:

When I try to create a new predictive model on the sample data (52k rows, no filters), the job stays in the RUNNING state.

Another Issue:

I cannot look into the gridworker logs because there is an SLF4J conflict (multiple bindings).

Part of the gridworker log:

/opt/gridworker/config/startup.sh: line 13: 11 Killed $JAVA -Xmx$MEM -Dsun.jnu.encoding=UTF-8 -Dfile.encoding=UTF-8 -Duri-builder=uri-builder-standard.xml -Dneuron.workerLogHome=$LOG -Dneuron.logHost=$LOG -Dzk_connect=$ZK_CONNECT -Dtransfer_uri=file://${APP_TRANSFER_URI}/ -Daws.accessKeyId=$ACCESS_KEY -Daws.secretKey=$SECRET_KEY -Dcontainer=worker-container.xml -Denvironment=$ENVIRONMENT -Dspark-context=spark/spark-local-context.xml -cp `ls ${APP_BIN}/*.jar | xargs | sed -e "s/ /:/g"`:/opt/spark/lib/spark-assembly-1.4.0-hadoop2.4.0.jar com.coldlight.ccc.job.dempsy.DempsyClusterJobExecutor

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/gridworker/bin/slf4j-log4j12-1.7.12.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/spark/lib/spark-assembly-1.4.0-hadoop2.4.0.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

Docker: the gridworker containers restart every couple of minutes.
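One quick check that might connect the restarts to the `Killed` line in the log: bash prints `Killed` when a child process is terminated by SIGKILL, and a process killed that way typically reports exit code 137 (128 + 9). The kernel OOM killer, which enforces container memory limits, is one common source of SIGKILL, and the setup above allots 8 GB to the gridworkers and 8 GB to Tomcat. The sketch below is a minimal example, assuming the Docker CLI is available on the host; the function name `report_container_health` and the container names are hypothetical placeholders, not part of ThingWorx Analytics. It only reads standard `docker inspect` fields.

```shell
#!/bin/sh
# Sketch: print restart count, OOM-kill flag and last exit code for each
# container passed as an argument. Uses standard `docker inspect`
# Go-template fields; container names are hypothetical placeholders.
report_container_health() {
  for name in "$@"; do
    docker inspect \
      --format '{{.Name}} restarts={{.RestartCount}} oomkilled={{.State.OOMKilled}} exit={{.State.ExitCode}}' \
      "$name"
  done
}

# Hypothetical usage (replace with the names listed by `docker ps -a`):
# report_container_health gridworker1 gridworker2
```

If the output shows `exit=137`, and possibly `oomkilled=true`, memory limits would be a likely place to look; `docker stats --no-stream` shows the current memory use of each running container.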

Could anyone advise where I should focus to find the cause?

Regards,

Adam